Free Speech Vs. The Internet’s Garbage: Why Reddit Needs To Be Intolerant Of Intolerance

Reddit has had ten years to test the waters on whether or not free speech works as a company policy, and the numbers are in: it doesn't.

Following a month-long stream of controversy that would make the Westboro Baptist Church blush (a controversy which we may have gotten in on), Reddit performed the ultimate moral blue-balling earlier this month, announcing that rather than removing their racist, sexist, and/or otherwise bigoted content, they would be keeping it behind a wall from now on.

On July 16, the site announced it would be cracking down on “communities whose purpose is reprehensible”. But instead of the much-rumoured cull of the offending forums (known as subreddits), an outcome perhaps implied by labelling them as “having no place on Reddit”, management assured the public that these offensive parts of the site would be walled off from the rest of it, requiring users to sign in to access them. These parts of the site will be free of advertising, as they “don’t want to profit off them”. And only one forum has been banned so far: a three-year-old board by the name of ‘Raping Women’, which, yes, is all about what it says in the title.

Surviving the cull are various havens for hate speech and bigotry whose names aren’t direct threats, including misogynistic groups, Gamergaters, and, in particular, white supremacist hang-outs. In fact, the number of supremacists on the site has grown so large that nearly every thread you find in the ‘Videos’ and ‘Today I Learned’ forums has a copy of the Stormfront mantra attached in the comments: a propaganda text used by neo-Nazis to recruit youths. (You can normally pick it out pretty easily; just find the comment where someone capitalises the W in white.)

The solution, to a casual observer, might seem simple: just shut down the hate groups. But for the administration, it becomes more complicated.

Reddit has dug itself into a hole in the last ten years, and there seems to be no logical escape without severe and damning compromise. They dug that hole with a shovel called ‘free speech’.

Free Speech Means Fuck You

Everyone who knows Reddit is more than simply ‘where the cat pictures are’ knows that the site claims to be a hub of absolute free speech. That’s not millennial hyperbole talking: the creators and more than one CEO have described the site as a “bastion of free speech”. Even the site’s ever-present prompt encouraging users to create their own forum lists “because you love freedom” as one of the reasons to do so.

It’s an outlook that Reddit’s management has stuck to through thick and thin, only removing content that was created with the intent to attack others on the site (such as in June, when it shut down the popular forum ‘Fat People Hate’ for attacking overweight users) or that could present legal problems (such as in the case of ‘The Fappening’, a forum dedicated to posting the various leaked celebrity nudes). And even that second criterion is up for debate, considering some of the content that gets posted to the site’s underbelly, like the blatant promotion of drug sales through the forum ‘Dark Net Markets’.

For the most part, the administration hands full control of each forum to volunteer moderators and only steps in when Rome is burning. And you know what? It’s fine for a company’s mission statement to be a political statement, and if we lived in a perfect world that wouldn’t be an issue. But this isn’t a perfect world, and when you apply a laissez-faire attitude to a four-million-strong, largely young male audience, you essentially create a primordial stew for racists, sexists, homophobes, and Nazis.

As Reddit got larger, it began to gain more and more grotesque appendages. The men’s rights movement, a branch of anti-feminism, has exploded in popularity thanks in no small part to its presence on Reddit, which changed its status from ‘weird almost-cult thing’ to something large enough for a major network sitcom to build a plot around.

Meanwhile, the ‘chimpire’ (sorry, vommed a little at typing that), a network of white supremacist forums strewn throughout the site, has lumbered onwards since its conception in 2009, bringing together the type who would celebrate a domestic terrorist walking into a church and shooting nine black people, so they can holler and cheer and focus on how to convince more people of the truth in their 14 words. And the scary thing is that it works. The Southern Poverty Law Center, a non-profit organisation which tracks hate groups, has declared Reddit to be the largest neo-Nazi recruiting ground on the internet.

This Isn’t The First Time They’ve Got Into Trouble

The problem with giving these types of violent people absolute free speech, and a platform to shout it from, has already come up on Reddit, back when the community decided to ask rapists for their side of the story. The thread, which was posted in July 2012, received thousands of replies, many from actual rapists going into detail about the crimes they had committed, and many more sympathising with the rapists, declaring, by way of some truly Olympian mental gymnastics, that they weren’t really bad people.

Five days after the first thread appeared, an emergency psychiatrist explained to the community that retelling these stories to a new audience reinforces the rapists’ actions, and in some cases gives them the same sick pleasure they felt during the act, making such a discourse quite dangerous. Moderators of that particular forum then stepped in and ‘nuked’ the thread, removing every single post to make sure that no more harm could come of it, which, in all fairness, worked as well as it could. While the damage had already been done, removing these posts destroyed any shred of reinforcement a future rapist might get from finding the thread again; it also denied these groups one place to congregate, which would have been great if Reddit hadn’t already provided for that community… but small steps deserve recognition.

Reddit still provides a place for dangerous people to talk, and to encourage each other to think and do heinous things, but this time the answer must come from the administration instead of the moderators. To the administration, though, removing hatred that gains power from attacking and dehumanising others, even when it reinforces harmful ideals that are then practised off the site, is, well, against absolute free speech, isn’t it?

The times a complete forum ban has occurred (as opposed to simply deleting an offending thread, like the ‘ask a rapist’ example) have been when a forum disrupted the site’s functions, through acts such as doxing (revealing a user’s real-life identity in an attempt to threaten them) and vote manipulation (pushing content higher up towards Reddit’s front page through bots or alternate accounts), or when paedophilic content was being shared through a subreddit. Even in that case, back in 2011 with the ‘Jailbait’ subreddit, the site waited until it suspected an actual paedophile ring had been set up and was trading private pictures before doing anything about it. The forum was one of the site’s largest communities for two or three years, and the administration defended it tooth and nail with the very same free speech defence.

The same thing happened again three years later, with photos being shared without consent, this time featuring celebrities instead of kids. The admins kept spouting the same ‘free speech’ line until the ship went down under the threat of a lawsuit. The site has now issued new harassment rules, which still fall short in that they target forums rather than users, and only combat direct, blatant threats of physical violence made against a single person through the website. Even so, some folk are furious at the lack of absolute free speech these regulations entail.

How Has Reddit Still Not Got This Right?

None of this has happened in a vacuum, and Reddit’s reputation isn’t exactly fantastic right now. When people think of Reddit, the words “free speech” aren’t what spring to mind; what springs to mind are phrases like “men’s rights”, “nonconsensual nude photos” and “racist haven”. Reddit has had ten years to test the waters on whether or not free speech works as a company policy, and the numbers are in: it doesn’t. It doesn’t work in a business sense since, despite consistent celebrity endorsements through their AMAs, ad space is still empty and the site is still in the red; and it doesn’t work in a moral sense, as this article has hopefully shown.

Reddit has no obligation to keep this content, and it isn’t doing the site any favours. Keeping up hate speech that no other private enterprise would tolerate isn’t a commendable move; it’s a dangerous one. Giving hateful ideals a place where they can fester and build a community around themselves, and defending that under the banner of free speech, is recklessly naive at best. But after all this time, it seems unlikely that the site will change.

The hole they have dug themselves into isn’t even that deep: as they’ve proven in the past, it isn’t hard to purge a thread or remove a subreddit that violates the site’s rules. But it’s as if the company just doesn’t want to climb out. With the announcement that it would simply be flagging material and putting it behind a membership wall, Reddit’s administration has signed off on every piece of hate that goes through the site. They have stared straight at the most vile parts of Reddit’s underbelly (the organised racism, sexism, and abuse) and decided that, in the name of free speech, it’s fine.

With that in mind, let’s take a look at what has now been deemed worthy of an ad-free spot on the so-called ‘front page of the internet’:

–

C**n Town

Membership: >20,000

A haven for the most disgusting ilk of white supremacist, this forum brings users together to discuss such joyous and not-at-all aggressive topics as how black and Jewish folk are damaging the human race, and how great the Confederate flag is.

The forum, moderated by a Redditor who goes by the moniker DylannStormRoof (named after the man accused of the recent Charleston church shooting), is now promoting itself as being ad-free, alongside a despicable caricature of a Jewish man asking users to donate to Reddit in gratitude for harbouring them. The current CEO named it as one of the forums that will not be banned, as it does not breach the new rules on harassment.

When the real Roof’s manifesto was unearthed a few months back, the forum celebrated, with one user chanting “one of us, one of us” and others wondering whether he was indeed one of them and had used the forum too.

–

The Red Pill

Membership: >100,000

A misogynistic pick-up artist forum that preaches pushing through any “last minute resistance” to sex, and whose ideas were heavily reflected in the manifesto of Isla Vista shooter Elliot Rodger.

This forum advises users to treat women as “the most responsible teenager in the house”, and lists dog training books as suggested reading to grow your relationship.

–

Cute Female Corpses

Membership: >4,000

As the name suggests: a place where users post and comment on pictures of dead women that they think are “cute”. Comments are always necrophilic, often misogynistic and at times paedophilic, particularly in the case of the thread concerning a 14-year-old Brazilian girl. One particular user was pissed that such a “hot piece of ass” would “take themselves out of the gene pool”.

Contains links to the forums ‘Raping Women’, ‘Pics of Dead Kids’, and ‘Female Entrails’. One of the top posts currently asks what will happen if they are banned. This might be the worst thing I’ve seen online.

–

Kotaku in Action

Membership: >40,000

You remember the harassment movement that went by the hashtag #GamerGate? This is where it organised after being booted from 4chan.

These days, it’s little more than a place for complaining about vaguely leftist media, but the impact it had on the lives of the women it targeted proves it can be dangerous if it remains unchecked.

–

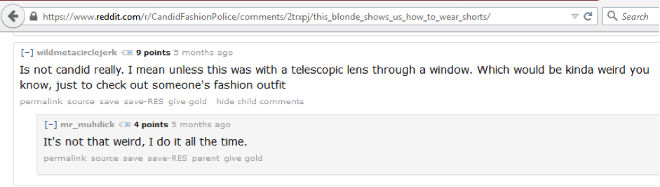

Candid Fashion Police

Membership: >50,000

After the ‘creepshots’ forum was taken down in 2012, following a real-world mess involving Gawker posting the personal details of one of the subreddit’s moderators, this is where users started posting their non-consensual pictures of random women in public.

–

Conspiracy

Membership: >330,000

Contains a near-constant flow of anti-Semitic posts, and featured a stylised picture of the Reddit mascot ‘Snoo’ as Hitler on its page late last year. It also encouraged its users to stalk a day care centre because it apparently had suspiciously high-up windows. (Users’ actions included, but were not limited to: knocking at the door and watching the building for hours at a time during business hours; pretending to be postal employees to gain entry; and taking photos of the children inside, with the intention of posting them on the site.)

The community as a whole once told a Holocaust revisionist that he didn’t hate Jews enough, and was therefore a ‘shill’.

–

B. Spence is a freelance writer currently based in Melbourne. You can find her on Twitter @beee_spence